Remote Direct Memory Access Over Converged Ethernet (RoCE) For Artificial Intelligence (AI) Workloads Market Report 2026

Global Outlook – By Component (Hardware, Software, Services), By Deployment Mode (On-Premises, Cloud), By Application (Data Centers, High-Performance Computing, Cloud Artificial Intelligence (AI), Edge Artificial Intelligence (AI), Enterprise Artificial Intelligence (AI), Other Applications), By End-User (Banking, Financial Services, and Insurance (BFSI), Healthcare, Information Technology (IT) And Telecommunications, Manufacturing, Retail, Government, Other End-Users) – Market Size, Trends, Strategies, and Forecast to 2035

Remote Direct Memory Access Over Converged Ethernet (RoCE) For Artificial Intelligence (AI) Workloads Market Overview

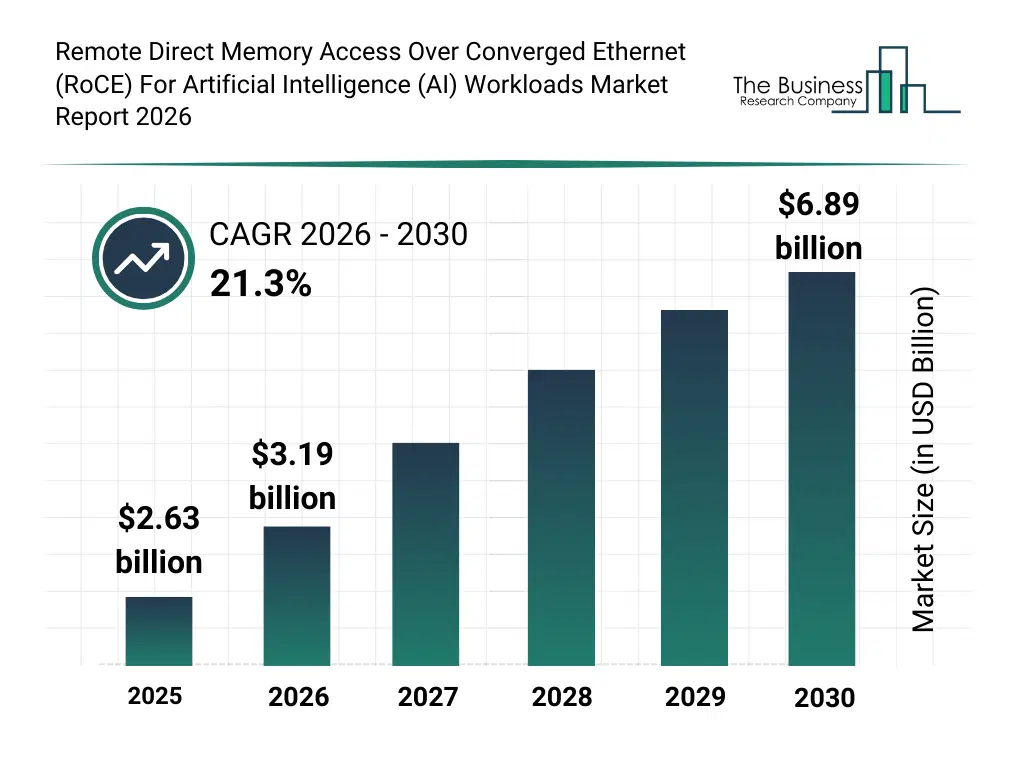

• The Remote Direct Memory Access Over Converged Ethernet (RoCE) For Artificial Intelligence (AI) Workloads market reached $2.63 billion in 2025
• Expected to grow to $6.89 billion in 2030 at a compound annual growth rate (CAGR) of 21.3%
• Growth Driver: The Rising Adoption Of Ethernet-Based Alternatives To InfiniBand Is Driving The Market Due To Scalability
• Market Trend: Ethernet-Based RDMA Redefines Hyperscale AI Networking Performance at Zettascale
• North America was the largest region in 2025, and Asia-Pacific is the fastest-growing region.

What Is Covered Under Remote Direct Memory Access Over Converged Ethernet (RoCE) For Artificial Intelligence (AI) Workloads Market?

Remote direct memory access over converged Ethernet (RoCE) for artificial intelligence (AI) workloads refers to the use of RoCE technology to enable high-speed, low-latency memory-to-memory data transfers across servers and storage systems in AI computing environments. Its primary purpose is to accelerate AI training and inference by reducing CPU overhead, minimizing data transfer latency, and improving bandwidth efficiency for large datasets. The main components of RoCE for AI workloads are hardware, software, and services. Hardware refers to network adapters and acceleration devices that enable high-speed, low-latency memory access across servers to optimize AI workloads. These solutions are deployed through on-premises and cloud models, depending on infrastructure and organizational requirements. Applications include data centers, high-performance computing, cloud AI, edge AI, enterprise AI, and other applications. End users include banking, financial services, and insurance companies; healthcare providers; information technology and telecommunications companies; manufacturing companies; retail organizations; government organizations; and others.

What Is The Remote Direct Memory Access Over Converged Ethernet (RoCE) For Artificial Intelligence (AI) Workloads Market Size and Share 2026?

The remote direct memory access over converged Ethernet (RoCE) for artificial intelligence (AI) workloads market has grown rapidly in recent years. It will grow from $2.63 billion in 2025 to $3.19 billion in 2026 at a compound annual growth rate (CAGR) of 21.0%. Growth in the historic period can be attributed to data center expansion, rising big data processing requirements, increasing adoption of high-performance computing clusters, early deployment of Ethernet-based networking solutions, and growth in enterprise cloud infrastructure.

What Is The Remote Direct Memory Access Over Converged Ethernet (RoCE) For Artificial Intelligence (AI) Workloads Market Growth Forecast?
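As a rough cross-check of the growth figures quoted in this report, the sketch below applies the standard CAGR formula, (end / start)^(1 / years) - 1, to the published market sizes ($2.63 billion in 2025, $3.19 billion in 2026, $6.89 billion in 2030). The function and variable names are illustrative assumptions, not part of the report. Because the published sizes are rounded, the recomputed rates can differ from the reported 21.0% and 21.3% by a few tenths of a percentage point.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Return the compound annual growth rate as a fraction (0.21 means 21%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: float) -> float:
    """Project a value forward by compounding `rate` for `years` periods."""
    return start_value * (1.0 + rate) ** years


# Market sizes in USD billion, as published in the report (rounded values).
size_2025 = 2.63
size_2026 = 3.19
size_2030 = 6.89

growth_2025_2026 = cagr(size_2025, size_2026, 1)
growth_2026_2030 = cagr(size_2026, size_2030, 4)
growth_2025_2030 = cagr(size_2025, size_2030, 5)

print(f"2025 to 2026: {growth_2025_2026:.1%}")
print(f"2026 to 2030: {growth_2026_2030:.1%}")
print(f"2025 to 2030: {growth_2025_2030:.1%}")
```

All three recomputed rates land close to 21%, which is consistent with the growth rates stated in the text.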

The remote direct memory access over converged Ethernet (RoCE) for artificial intelligence (AI) workloads market is expected to see rapid growth in the next few years. It will grow to $6.89 billion in 2030 at a compound annual growth rate (CAGR) of 21.3%. Growth in the forecast period can be attributed to growing AI model complexity, expansion of hyperscale AI data centers, rising demand for distributed training frameworks, increasing investment in GPU and accelerator hardware, and growth in real-time analytics and inference workloads. Major trends in the forecast period include increasing deployment of high-bandwidth Ethernet interconnects, rising adoption of low-latency distributed AI training architectures, expansion of GPU cluster networking optimization solutions, growing integration of traffic management and congestion control software, and enhancement of scalable data center networking infrastructure.

Global Remote Direct Memory Access Over Converged Ethernet (RoCE) For Artificial Intelligence (AI) Workloads Market Segmentation

1) By Component: Hardware, Software, Services
2) By Deployment Mode: On-Premises, Cloud
3) By Application: Data Centers, High-Performance Computing, Cloud Artificial Intelligence (AI), Edge Artificial Intelligence (AI), Enterprise Artificial Intelligence (AI), Other Applications
4) By End-User: Banking, Financial Services, and Insurance (BFSI), Healthcare, Information Technology (IT) And Telecommunications, Manufacturing, Retail, Government, Other End-Users

Subsegments:

1) By Hardware: Network Interface Cards, Ethernet Switches, Cables And Connectors, Data Center Servers, High Performance Storage Systems
2) By Software: Network Management Software, Traffic Optimization Software, Latency Monitoring Software, Workload Orchestration Software, Performance Analytics Software
3) By Services: Consulting Services, Deployment And Integration Services, Network Optimization Services, Maintenance And Support Services, Training And Advisory Services

What Is The Driver Of The Remote Direct Memory Access Over Converged Ethernet (RoCE) For Artificial Intelligence (AI) Workloads Market?

The rising adoption of Ethernet-based alternatives to InfiniBand is expected to propel the growth of the RoCE for AI workloads market going forward. Ethernet-based alternatives to InfiniBand include technologies such as RDMA over Converged Ethernet (RoCE), which enable remote direct memory access over standard Ethernet networks deployed in AI data centers. Adoption of these alternatives is increasing as hyperscalers and cloud providers seek cost-efficient, scalable networking solutions that are compatible with existing Ethernet infrastructure. RoCE for AI workloads supports this shift by enabling low-latency, high-throughput GPU-to-GPU communication and efficient distributed training across Ethernet fabrics. For instance, in June 2025, Vitex LLC, a US-based dynamic fiber optic solutions provider, reported that hyperscale cloud providers are making unprecedented capital investments in AI infrastructure. In 2025, Microsoft allocated approximately $80 billion, Amazon committed $86 billion (as part of a broader $100 billion investment plan), Google invested $75 billion, and Meta spent $65 billion in capital expenditures, bringing the combined total from leading technology firms to over $450 billion. Therefore, the rising adoption of Ethernet-based alternatives to InfiniBand is driving the growth of the RoCE for AI workloads market.

Key Players In The Global Remote Direct Memory Access Over Converged Ethernet (RoCE) For Artificial Intelligence (AI) Workloads Market

Major companies operating in the remote direct memory access over converged Ethernet (RoCE) for artificial intelligence (AI) workloads market are Huawei Technologies Co. Ltd., Dell Technologies Inc., IBM Corporation, NVIDIA Corporation, Cisco Systems Inc., Lenovo Group Limited, Intel Corporation, Oracle Corporation, Broadcom Inc., Quanta Computer Inc., Hewlett Packard Enterprise Company, NEC Corporation, ASUSTeK Computer Inc., Super Micro Computer Inc., NetApp Inc., Arista Networks Inc., Marvell Technology Inc., Synopsys Inc., Pure Storage Inc., Extreme Networks Inc., Napatech A/S, Mitac Computing Technology Corporation, Aviz Networks Inc., and Pica8 Inc.

Global Remote Direct Memory Access Over Converged Ethernet (RoCE) For Artificial Intelligence (AI) Workloads Market Trends and Insights

Major companies operating in the RoCE for AI workloads market are focusing on developing AI network fabrics to support large-scale, low-latency, and cost-efficient distributed AI training. An AI network fabric is a high-performance interconnect system that enables scalable GPU communication using Ethernet-based RDMA technologies. For instance, in October 2025, Oracle Corporation, a US-based cloud infrastructure and enterprise software provider, announced the OCI Zettascale10 Supercluster with Oracle Acceleron RoCE. The solution integrates up to 800,000 NVIDIA graphics processing units across multiple data centers using a flatter, Ethernet-based RoCE topology designed to reduce latency, improve resiliency, and enhance performance predictability. By combining line-rate encryption, network interface card-level security enforcement, and multicloud deployment flexibility, the platform demonstrates that RoCE can support so-called zettascale-class AI workloads with performance comparable to leading InfiniBand-based architectures for targeted AI training applications.

What Are Latest Mergers And Acquisitions In The Remote Direct Memory Access Over Converged Ethernet (RoCE) For Artificial Intelligence (AI) Workloads Market?

In September 2025, NVIDIA Corporation, a US-based technology company, acquired Enfabrica for over $900 million. With this acquisition, NVIDIA aims to enhance its Ethernet-based AI networking and data-movement capabilities by integrating advanced SuperNIC and memory fabric technologies to improve the scalability, resiliency, and efficiency of large-scale AI training clusters. Enfabrica is a US-based provider of high-performance AI interconnect silicon, offering SuperNICs, multipath fabric architectures, and disaggregated memory networking solutions designed to reduce congestion and optimize distributed artificial intelligence workloads.

Regional Outlook

North America was the largest region in the RoCE for AI workloads market in 2025. Asia-Pacific is expected to be the fastest-growing region in the forecast period. The regions covered in this market report are Asia-Pacific, South East Asia, Western Europe, Eastern Europe, North America, South America, Middle East, and Africa. The countries covered in this market report are Australia, Brazil, China, France, Germany, India, Indonesia, Japan, Taiwan, Russia, South Korea, UK, USA, Canada, Italy, and Spain.

What Defines the Remote Direct Memory Access Over Converged Ethernet (RoCE) For Artificial Intelligence (AI) Workloads Market?

The remote direct memory access over converged Ethernet (RoCE) for artificial intelligence (AI) workloads market consists of revenues earned by entities by providing services such as low-latency data transfer, high-throughput networking, congestion control and traffic management, and scalable distributed training support. The market value includes the value of related goods sold by the service provider or included within the service offering. The RoCE for AI workloads market includes sales of high-speed Ethernet adapters, AI cluster interconnects, RDMA networking switches, data center network solutions, and high-performance GPU networking modules. Values in this market are ‘factory gate’ values, that is, the value of goods sold by the manufacturers or creators of the goods, whether to other entities (including downstream manufacturers, wholesalers, distributors, and retailers) or directly to end customers. The value of goods in this market includes related services sold by the creators of the goods.

How is Market Value Defined and Measured?

The market value is defined as the revenues that enterprises gain from the sale of goods and/or services within the specified market and geography through sales, grants, or donations, expressed in USD unless otherwise specified. The revenues for a specified geography are consumption values, that is, revenues generated by organizations in the specified geography within the market, irrespective of where the goods or services are produced. The market value does not include revenues from resales, either further along the supply chain or as part of other products.

What Key Data and Analysis Are Included in the Remote Direct Memory Access Over Converged Ethernet (RoCE) For Artificial Intelligence (AI) Workloads Market Report 2026?

The remote direct memory access over converged Ethernet (RoCE) for artificial intelligence (AI) workloads market research report is one of a series of new reports from The Business Research Company that provides market statistics, including global market size, regional shares, competitors with their market shares, detailed market segments, and market trends and opportunities for the RoCE for AI workloads industry. The report provides an in-depth analysis of the current and future state of the industry.

Remote Direct Memory Access Over Converged Ethernet (RoCE) For Artificial Intelligence (AI) Workloads Market Report Forecast Analysis

| Report Attribute | Details |
|---|---|
| Market Size Value In 2026 | $3.19 billion |
| Revenue Forecast In 2030 | $6.89 billion |
| Growth Rate | CAGR of 21.3% through 2030 |
| Base Year For Estimation | 2025 |
| Actual Estimates/Historical Data | 2020-2025 |
| Forecast Period | 2026 - 2030 - 2035 |
| Market Representation | Revenue in USD Billion and CAGR from 2026 to 2035 |
| Segments Covered | Component, Deployment Mode, Application, End-User |
| Regional Scope | Asia-Pacific, Western Europe, Eastern Europe, North America, South America, Middle East, Africa |
| Country Scope | The countries covered in the report are Australia, Brazil, China, France, Germany, India, ... |
| Key Companies Profiled | Huawei Technologies Co. Ltd., Dell Technologies Inc., IBM Corporation, NVIDIA Corporation, Cisco Systems Inc., Lenovo Group Limited, Intel Corporation, Oracle Corporation, Broadcom Inc., Quanta Computer Inc., Hewlett Packard Enterprise Company, NEC Corporation, ASUSTeK Computer Inc., Super Micro Computer Inc., NetApp Inc., Arista Networks Inc., Marvell Technology Inc., Synopsys Inc., Pure Storage Inc., Extreme Networks Inc., Napatech A/S, Mitac Computing Technology Corporation, Aviz Networks Inc., Pica8 Inc. |
