Single-Modal Affective Computing Market Report 2026

Global Outlook – By Component (Hardware, Software), By Technology (Facial Recognition, Voice Recognition, Gesture Recognition, Textual Emotion Analysis), By Application (Healthcare, Education, Gaming, Automotive, Retail), By End-User (Individual Consumers, Enterprises) – Market Size, Trends, Strategies, and Forecast to 2035

Single-Modal Affective Computing Market Overview

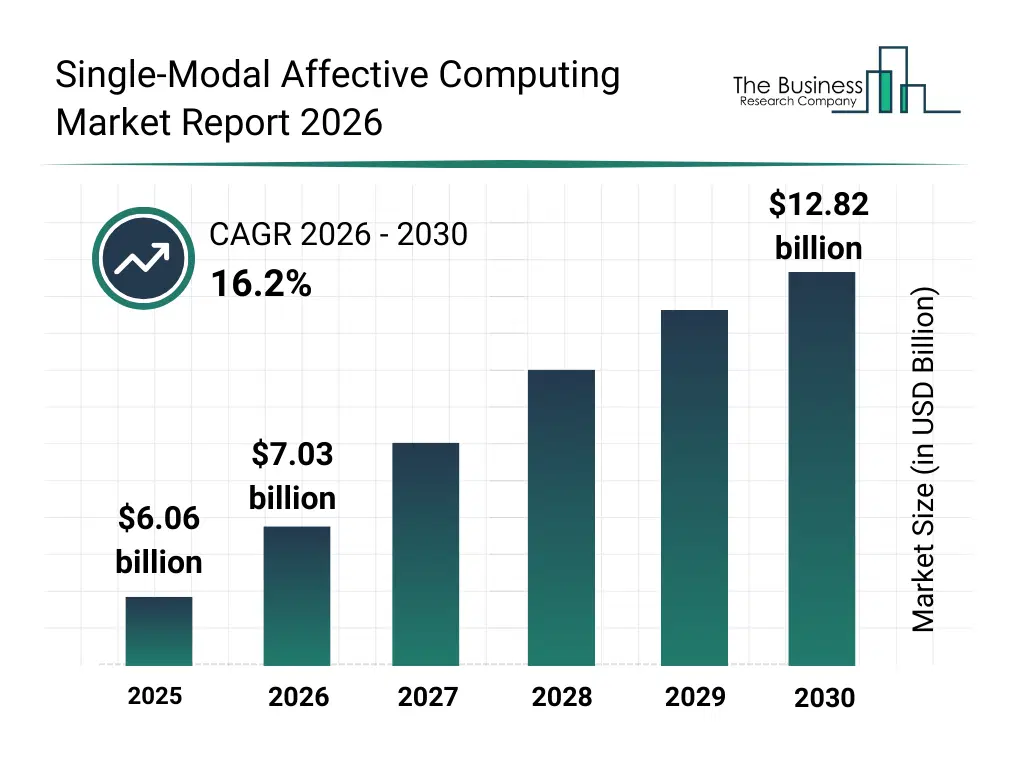

• The single-modal affective computing market reached $6.06 billion in 2025
• Expected to grow to $12.82 billion in 2030 at a compound annual growth rate (CAGR) of 16.2%
• Growth Driver: Growing Interest In Mental Health Monitoring Solutions
• Market Trend: Advancements In Prosody-Driven Edge-Based Single-Modal Affective Computing
• North America was the largest region in 2025 and Asia-Pacific is the fastest-growing region

What Is Covered Under Single-Modal Affective Computing Market?

Single-modal affective computing is a branch of artificial intelligence that detects, interprets, and responds to human emotions using data from only one modality, such as facial expressions, speech signals, text, or physiological signals. It analyzes emotion-related cues within that single data source to infer affective states, which enables emotion-aware systems where only one type of input data is available or practical. The main components of single-modal affective computing are hardware and software. Hardware refers to devices such as cameras, microphones, and sensors used to capture physiological and behavioral data for emotion recognition. These solutions leverage technologies such as facial recognition, voice recognition, gesture recognition, and textual emotion analysis. Applications include healthcare, education, gaming, automotive, and retail, and end users include individual consumers and enterprises.

What Is The Single-Modal Affective Computing Market Size and Share 2026?

The single-modal affective computing market size has grown rapidly in recent years. It will grow from $6.06 billion in 2025 to $7.03 billion in 2026 at a compound annual growth rate (CAGR) of 16.0%. The growth in the historic period can be attributed to growth in human-computer interaction research, the rise of AI-based analytics, expansion of digital customer experience tools, increased voice assistant usage, and demand for sentiment analysis.

What Is The Single-Modal Affective Computing Market Growth Forecast?

The single-modal affective computing market size is expected to see rapid growth in the next few years. It will grow to $12.82 billion in 2030 at a compound annual growth rate (CAGR) of 16.2%. The growth in the forecast period can be attributed to adoption in mental health screening, expansion in smart devices, rising use in customer service automation, growth in emotion-aware interfaces, and integration into interactive media systems. Major trends in the forecast period include voice-based emotion recognition engines, facial expression analysis software, text sentiment detection platforms, real-time emotion monitoring tools, and edge-based affective AI processing.

Global Single-Modal Affective Computing Market Segmentation

1) By Component: Hardware; Software
2) By Technology: Facial Recognition; Voice Recognition; Gesture Recognition; Textual Emotion Analysis
3) By Application: Healthcare; Education; Gaming; Automotive; Retail
4) By End-User: Individual Consumers; Enterprises

Subsegments:
1) By Hardware: Cameras; Microphones; Physiological Sensors; Wearable Devices; Processing Units
2) By Software: Emotion Recognition Software; Facial Expression Analysis Software; Speech And Voice Analysis Software; Text Sentiment Analysis Software; Data Analytics And Visualization Software

What Is The Driver Of The Single-Modal Affective Computing Market?

The growing interest in mental health monitoring solutions is expected to propel the growth of the single-modal affective computing market going forward. Mental health monitoring solutions are technologies and systems that track psychological well-being by collecting and analyzing data, helping to detect early signs of mental health issues. Their rise is driven by people seeking greater awareness and better management of their mental well-being. Single-modal affective computing supports these solutions by detecting emotional states from a single type of data, thereby aiding mental health assessment and monitoring. For instance, in January 2024, according to a survey published in the Journal of Medical Internet Research, a Canada-based peer-reviewed open-access journal, a larger share of patients with mental health conditions reported using or being willing to use digital self-monitoring tools in 2024, compared with around 66% in 2023, showing a clear increase in patient interest and acceptance as technology and provider adoption expanded. Therefore, the growing interest in mental health monitoring solutions is driving the growth of the single-modal affective computing industry.

Key Players In The Global Single-Modal Affective Computing Market

Major companies operating in the single-modal affective computing market are Cogito Health Analytics Ltd., Empatica Inc., Noldus Information Technology B.V., Hume AI Inc., Beyond Verbal Ltd., iMotions A/S, VoiceSense Ltd., Realeyes Limited, Sentiance NV, Paravision Inc., Aural Analytics Inc., NVISO AG, Eyeris Technologies Inc., Ellipsis Health Inc., Behavioural Signals Ltd., Sonde Health Inc., BioBeats Ltd., Emteq Labs Ltd., Emoshape Ltd., Wluper Ltd., Vocalis Health Ltd., CrowdEmotion Ltd., and AffectLab Ltd.

Global Single-Modal Affective Computing Market Trends and Insights

Major companies operating in the single-modal affective computing market are focusing on innovations in wearable and edge-based devices for real-time emotion monitoring, such as prosody-driven emotion recognition, to gain competitive differentiation through low-latency inference, privacy-preserving deployment, and more natural human–AI interactions. Prosody-driven emotion recognition is the process of detecting and interpreting human emotional states using only vocal characteristics, rather than the spoken words themselves. For instance, in April 2023, Hume AI, a US-based company, introduced its Empathic Voice Interface (EVI) API. The API offers a real-time, voice-only affective computing platform that enables emotionally intelligent conversations by analyzing users’ vocal prosody, including tone, rhythm, and timbre. It leverages an empathic large language model (eLLM) to detect emotional cues and respond with contextually appropriate timing, language, and vocal expression. The API supports low-latency, bidirectional audio streaming, allowing natural turn-taking and seamless interruption handling. By dynamically modulating its own speech characteristics, EVI produces responses that sound attentive, empathetic, and human-like.

What Are Latest Mergers And Acquisitions In The Single-Modal Affective Computing Market?

In August 2025, Meta Platforms Inc., a US-based technology company specializing in social media, artificial intelligence, and immersive technologies, acquired WaveForms Inc. for an undisclosed amount. The acquisition integrates emotion-sensitive speech recognition and synthesis technologies into Meta’s platforms, particularly within its Superintelligence Labs, to enable more natural and empathetic human–AI interactions. WaveForms Inc. is a US-based AI audio startup specializing in emotion-aware speech technologies that produce human-like, emotionally expressive voices.

Regional Insights

North America was the largest region in the single-modal affective computing market in 2025. Asia-Pacific is expected to be the fastest-growing region in the forecast period. The regions covered in this market report are Asia-Pacific, South East Asia, Western Europe, Eastern Europe, North America, South America, Middle East, and Africa. The countries covered in this market report are Australia, Brazil, China, France, Germany, India, Indonesia, Japan, Taiwan, Russia, South Korea, UK, USA, Canada, Italy, and Spain.

What Defines the Single-Modal Affective Computing Market?

The single-modal affective computing market consists of revenues earned by entities by providing services such as voice emotion recognition, text-based sentiment analysis, emotion detection from images or audio, affective data labeling, real-time emotion monitoring, and behavioral analytics. The market value includes the value of related goods sold by the service provider or included within the service offering. The single-modal affective computing market includes sales of emotion recognition engines, facial analysis tools, speech emotion analysis software, and sentiment analysis platforms. Values in this market are ‘factory gate’ values, that is, the value of goods sold by the manufacturers or creators of the goods, whether to other entities (including downstream manufacturers, wholesalers, distributors, and retailers) or directly to end customers. The value of goods in this market includes related services sold by the creators of the goods.

How Is Market Value Defined and Measured?

The market value is defined as the revenues that enterprises gain from the sale of goods and/or services within the specified market and geography through sales, grants, or donations, expressed in USD unless otherwise specified. The revenues for a specified geography are consumption values, that is, revenues generated by organizations in the specified geography within the market, irrespective of where the goods or services are produced. The market value does not include revenues from resales, either further along the supply chain or as part of other products.

What Key Data and Analysis Are Included in the Single-Modal Affective Computing Market Report 2026?

The single-modal affective computing market research report is one of a series of new reports from The Business Research Company that provide market statistics, including global market size, regional shares, competitors with their market shares, detailed market segments, and market trends and opportunities for the single-modal affective computing industry. The report offers an in-depth analysis of the current and future state of the industry.

Single-Modal Affective Computing Market Report Forecast Analysis

| Report Attribute | Details |
|---|---|
| Market Size Value In 2026 | $7.03 billion |
| Revenue Forecast In 2030 | $12.82 billion |
| Growth Rate | CAGR of 16.2% from 2026 to 2030 |
| Base Year For Estimation | 2025 |
| Actual Estimates/Historical Data | 2020-2025 |
| Forecast Period | 2026 - 2030 - 2035 |
| Market Representation | Revenue in USD Billion and CAGR from 2026 to 2035 |
| Segments Covered | Component, Technology, Application, End-User |
| Regional Scope | Asia-Pacific, Western Europe, Eastern Europe, North America, South America, Middle East, Africa |
| Country Scope | The countries covered in the report are Australia, Brazil, China, France, Germany, India, ... |
| Key Companies Profiled | Cogito Health Analytics Ltd., Empatica Inc., Noldus Information Technology B.V., Hume AI Inc., Beyond Verbal Ltd., iMotions A/S, VoiceSense Ltd., Realeyes Limited, Sentiance NV, Paravision Inc., Aural Analytics Inc., NVISO AG, Eyeris Technologies Inc., Ellipsis Health Inc., Behavioural Signals Ltd., Sonde Health Inc., BioBeats Ltd., Emteq Labs Ltd., Emoshape Ltd., Wluper Ltd., Vocalis Health Ltd., CrowdEmotion Ltd., and AffectLab Ltd. |

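The growth rates quoted in this report can be cross-checked against its own market size figures using the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The following is a minimal sketch for readers who want to reproduce that arithmetic. The function and variable names are illustrative and not part of the report; the only inputs are the $6.06 billion (2025), $7.03 billion (2026), and $12.82 billion (2030) values stated in the text.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.16 means 16%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market sizes in USD billion, as stated in the report text.
size_2025 = 6.06
size_2026 = 7.03
size_2030 = 12.82

# One-year growth from the base year to 2026.
growth_2025_2026 = cagr(size_2025, size_2026, years=1)

# Four-year compound growth from 2026 to 2030.
growth_2026_2030 = cagr(size_2026, size_2030, years=4)

print(f"2025 to 2026: {growth_2025_2026:.1%}")
print(f"2026 to 2030: {growth_2026_2030:.1%}")
```

Under these inputs the two results round to 16.0% and 16.2%, which matches the rates given for the 2025 to 2026 step and for the period to 2030.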