Graphics Processing Unit (GPU) Pooling for Large Language Models (LLMs) Market Report 2026

Global Outlook – By Component (Hardware, Software, Services), By Deployment Mode (On-Premises, Cloud), By Enterprise Size (Small And Medium Enterprises, Large Enterprises), By Application (Model Training, Inference, Research, Enterprise Solutions, Other Applications), By End-User (Banking, Financial Services, And Insurance (BFSI), Healthcare, Information Technology (IT) And Telecommunications, Media And Entertainment, Research Institutes, Other End-Users) – Market Size, Trends, Strategies, and Forecast to 2035

Graphics Processing Unit (GPU) Pooling for Large Language Models (LLMs) Market Overview

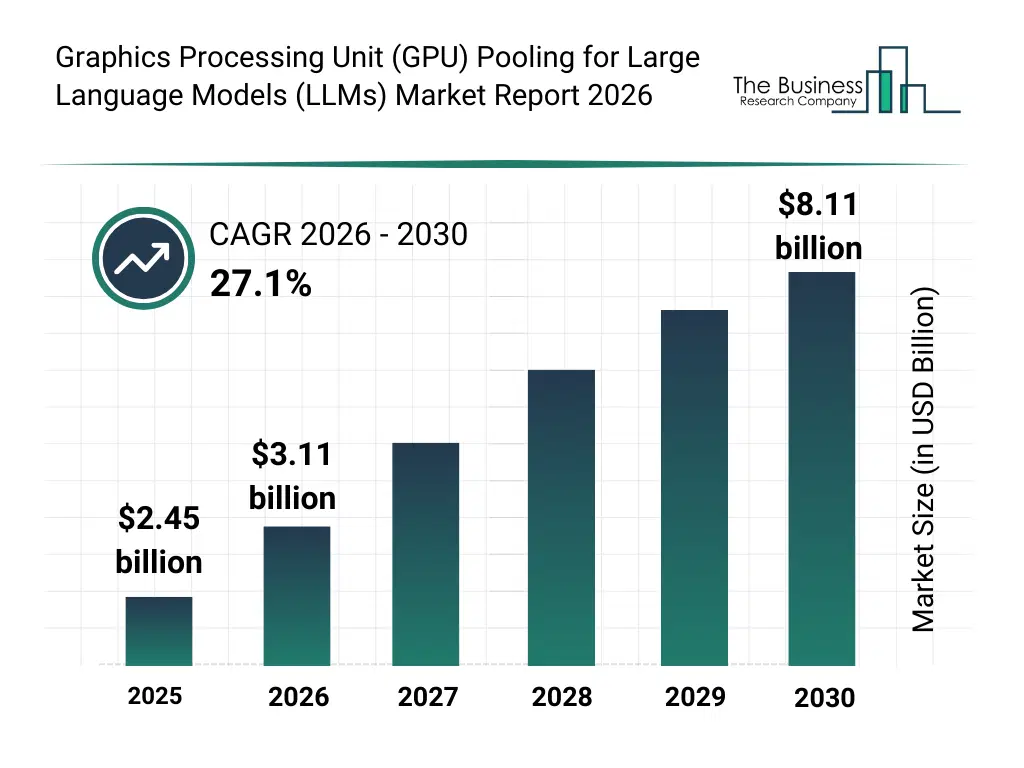

• The graphics processing unit (GPU) pooling for large language models (LLMs) market size reached $2.45 billion in 2025

• Expected to grow to $8.11 billion in 2030 at a compound annual growth rate (CAGR) of 27.1%

• Growth Driver: Rising GPU Scarcity Is Fueling The Growth Of The Market Due To AI Demand Outpacing Supply

• Market Trend: GPU Resource Virtualization Is Driving Efficient Multi-Model LLM Deployment

• North America was the largest region in 2025, and Asia-Pacific is the fastest-growing region.

What Is Covered Under Graphics Processing Unit (GPU) Pooling for Large Language Models (LLMs) Market?

Graphics processing unit (GPU) pooling for large language models (LLMs) refers to the practice of aggregating multiple GPUs into a shared resource pool to efficiently serve LLM inference or training workloads. Instead of dedicating a single GPU to one task, GPU pooling allows dynamic allocation of GPU memory and compute across multiple LLM requests or models, improving utilization, reducing idle resources, and lowering overall infrastructure costs.

The main components of GPU pooling for LLMs are hardware, software, and services. Hardware refers to shared GPU infrastructure that enables multiple LLM workloads to access pooled computing resources dynamically, improving utilization, scalability, and cost efficiency. The solutions are deployed through on-premises and cloud modes and are adopted by small and medium enterprises as well as large enterprises. Applications include model training, inference, research, enterprise solutions, and other applications. The end users of GPU pooling for LLM solutions include banking, financial services, and insurance (BFSI), healthcare, information technology and telecommunications, media and entertainment, research institutes, and other end users.
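The idea of dynamic allocation from a shared pool can be illustrated with a small sketch. The following is a minimal, hypothetical example and does not represent any vendor's implementation: the `Gpu` and `GpuPool` classes, the best-fit placement rule, the workload names, and the memory sizes are all assumptions made for illustration, and only memory (not compute) is tracked.

```python
from dataclasses import dataclass, field


@dataclass
class Gpu:
    """One physical GPU in the pool (hypothetical model: memory only)."""
    gpu_id: int
    total_gb: float
    allocations: dict = field(default_factory=dict)  # workload name -> GB reserved

    @property
    def free_gb(self) -> float:
        return self.total_gb - sum(self.allocations.values())


class GpuPool:
    """Shared pool that places workloads on whichever GPU has room."""

    def __init__(self, gpus):
        self.gpus = list(gpus)

    def allocate(self, workload: str, mem_gb: float):
        """Best fit: choose the GPU with the least free memory that still fits.

        Returns the chosen GPU id, or None if no GPU has enough free memory.
        """
        candidates = [g for g in self.gpus if g.free_gb >= mem_gb]
        if not candidates:
            return None
        gpu = min(candidates, key=lambda g: g.free_gb)
        gpu.allocations[workload] = gpu.allocations.get(workload, 0.0) + mem_gb
        return gpu.gpu_id

    def release(self, workload: str) -> None:
        """Return a workload's memory to the pool."""
        for g in self.gpus:
            g.allocations.pop(workload, None)

    def utilization(self) -> float:
        """Fraction of total pool memory currently reserved."""
        total = sum(g.total_gb for g in self.gpus)
        used = sum(g.total_gb - g.free_gb for g in self.gpus)
        return used / total


# Illustrative workloads and sizes (assumed values, not measurements).
pool = GpuPool([Gpu(0, 80.0), Gpu(1, 80.0)])
requests = [("chat-7b", 20.0), ("code-13b", 30.0), ("embed-1b", 10.0)]
placements = {name: pool.allocate(name, gb) for name, gb in requests}
large_placement = pool.allocate("big-70b", 70.0)
```

In this sketch the three small workloads are packed onto one GPU, which leaves the second GPU free for the larger request. With one dedicated GPU per workload, the same four workloads would need four GPUs.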

What Is The Graphics Processing Unit (GPU) Pooling for Large Language Models (LLMs) Market Size and Share 2026?

The graphics processing unit (GPU) pooling for large language models (LLMs) market size has grown rapidly in recent years. It will grow from $2.45 billion in 2025 to $3.11 billion in 2026 at a compound annual growth rate (CAGR) of 26.8%. The growth in the historic period can be attributed to growth in large language model development, expansion of cloud-based AI infrastructure, increasing GPU utilization inefficiencies, rising demand for scalable AI compute, and the availability of high-performance GPUs.

What Is The Graphics Processing Unit (GPU) Pooling for Large Language Models (LLMs) Market Growth Forecast?

The graphics processing unit (GPU) pooling for large language models (LLMs) market size is expected to see rapid growth in the next few years. It will grow to $8.11 billion in 2030 at a compound annual growth rate (CAGR) of 27.1%. The growth in the forecast period can be attributed to increasing adoption of generative AI applications, rising investments in AI data centers, growing focus on energy-efficient compute utilization, expansion of enterprise AI deployment, and advancements in GPU virtualization technologies. Major trends in the forecast period include increasing adoption of dynamic GPU resource allocation, rising demand for on-demand GPU pooling services, growing use of multi-tenant GPU architectures, expansion of performance optimization and monitoring tools, and enhanced focus on cost-efficient AI infrastructure.

Global Graphics Processing Unit (GPU) Pooling for Large Language Models (LLMs) Market Segmentation

1) By Component: Hardware; Software; Services
2) By Deployment Mode: On-Premises; Cloud
3) By Enterprise Size: Small And Medium Enterprises; Large Enterprises
4) By Application: Model Training; Inference; Research; Enterprise Solutions; Other Applications
5) By End-User: Banking, Financial Services, And Insurance (BFSI); Healthcare; Information Technology (IT) And Telecommunications; Media And Entertainment; Research Institutes; Other End-Users

Subsegments:

1) By Hardware: High Performance Graphics Processors; Data Center Servers; High Speed Interconnect Systems; Storage And Memory Systems; Power And Cooling Infrastructure
2) By Software: Resource Management Software; Workload Scheduling Software; Performance Monitoring Software; Virtualization And Orchestration Software; Usage Analytics And Reporting Software
3) By Services: Consulting Services; Deployment And Integration Services; Resource Optimization Services; Maintenance And Support Services; Training And Advisory Services

What Is The Driver Of The Graphics Processing Unit (GPU) Pooling for Large Language Models (LLMs) Market?

Rising graphics processing unit (GPU) scarcity is expected to propel the growth of the GPU pooling for large language models (LLMs) market going forward. GPU scarcity refers to the shortage or limited availability of GPUs relative to demand, especially for high-performance tasks and scientific computing. Scarcity is rising because the wide adoption of artificial intelligence (AI) and data-intensive technologies requires massive GPU resources, while limited manufacturing capacity and complex semiconductor supply chains constrain supply. GPU pooling for LLMs helps address GPU scarcity by creating a virtualized pool of GPU resources that can be dynamically allocated to multiple models and users. For instance, in June 2024, HPCwire, a US-based company, reported that NVIDIA's data-center GPU shipments grew sharply in 2023 to approximately 3.76 million units, citing a study by semiconductor analyst firm TechInsights. This was an increase of more than 1 million units over 2022, when NVIDIA's data-center GPU shipments stood at 2.64 million units. Therefore, rising GPU scarcity is driving the growth of the GPU pooling for LLMs industry.

Key Players In The Global Graphics Processing Unit (GPU) Pooling for Large Language Models (LLMs) Market

Major companies operating in the graphics processing unit (GPU) pooling for large language models (LLMs) market are Microsoft Corporation, Amazon Web Services Inc., International Business Machines Corporation, Oracle Corporation, CoreWeave Inc., DigitalOcean Inc., Cyfuture AI, NVIDIA Corporation, Vast.ai, GMI Cloud, Nebius Group N.V., Salad Technologies Inc., Vultr Holdings LLC, Hivenet, AceCloud Hosting Pvt. Ltd., Paperspace Inc., Jarvis Labs, Hyperstack Cloud, Lambda Labs Inc., Akash Network, NodeGoAI, Neysa, and RunPod Inc.

Global Graphics Processing Unit (GPU) Pooling for Large Language Models (LLMs) Market Trends and Insights

Major companies operating in the graphics processing unit (GPU) pooling for large language models (LLMs) market are focusing on advances in GPU resource virtualization, such as integration with token-aware load balancing, to achieve higher GPU utilization, improved inference efficiency, reduced operational costs, and scalable multi-model deployment. GPU resource virtualization refers to the use of software-defined techniques to abstract, partition, and dynamically allocate GPU resources across multiple LLMs and users. For instance, in October 2025, Alibaba Cloud, a China-based company, introduced Aegaeon, a multi-model GPU pooling solution that enables multiple LLMs to be served concurrently on shared GPU resources, significantly improving utilization efficiency. Aegaeon uses token-level scheduling to dynamically allocate GPU compute power based on real-time inference demand. Its architecture combines a proxy layer, a GPU pool, and an intelligent memory manager to reduce the GPU idle time caused by low-traffic models. The system addresses the challenge of LLM proliferation, where most models receive infrequent requests but still consume dedicated resources.

What Are The Latest Mergers And Acquisitions In The Graphics Processing Unit (GPU) Pooling for Large Language Models (LLMs) Market?

In December 2024, NVIDIA Corporation, a US-based technology company, acquired Run:ai for an undisclosed amount. With this acquisition, NVIDIA aims to strengthen its artificial intelligence (AI) infrastructure and software stack by integrating Run:ai's expertise in graphics processing unit (GPU) orchestration, pooling, and workload management, enhancing its ability to optimize GPU utilization and efficiency for large-scale AI workloads, including training and inference for large language models. Run:ai is an Israel-based company specializing in Kubernetes-based GPU orchestration and resource optimization software that enables dynamic pooling and efficient allocation of GPU compute for AI and machine learning workloads.

Regional Insights

North America was the largest region in the graphics processing unit (GPU) pooling for large language models (LLMs) market in 2025. Asia-Pacific is expected to be the fastest-growing region in the forecast period. The regions covered in this market report are Asia-Pacific, South East Asia, Western Europe, Eastern Europe, North America, South America, Middle East, and Africa. The countries covered in this market report are Australia, Brazil, China, France, Germany, India, Indonesia, Japan, Taiwan, Russia, South Korea, UK, USA, Canada, Italy, and Spain.

What Defines the Graphics Processing Unit (GPU) Pooling for Large Language Models (LLMs) Market?

The graphics processing unit (GPU) pooling for large language models (LLMs) market consists of revenues earned by entities by providing services such as GPU allocation management, performance optimization, and resource monitoring. The market value includes the value of related goods sold by the service provider or included within the service offering. The GPU pooling for LLMs market includes sales of shared GPU pooling, dedicated GPU pooling, and on-demand GPU pooling. Values in this market are ‘factory gate’ values, that is, the value of goods sold by the manufacturers or creators of the goods, whether to other entities (including downstream manufacturers, wholesalers, distributors, and retailers) or directly to end customers. The value of goods in this market includes related services sold by the creators of the goods.

How is Market Value Defined and Measured?

The market value is defined as the revenues that enterprises gain from the sale of goods and/or services within the specified market and geography through sales, grants, or donations in terms of the currency (in USD unless otherwise specified). The revenues for a specified geography are consumption values, that is, revenues generated by organizations in the specified geography within the market, irrespective of where they are produced. It does not include revenues from resales along the supply chain, either further along the supply chain or as part of other products.

What Key Data and Analysis Are Included in the Graphics Processing Unit (GPU) Pooling for Large Language Models (LLMs) Market Report 2026?

The graphics processing unit (GPU) pooling for large language models (LLMs) market research report is one of a series of new reports from The Business Research Company. It provides market statistics, including global market size, regional shares, competitors with their market shares, detailed market segments, and market trends and opportunities for the GPU pooling for LLMs industry, along with an in-depth analysis of the current and future state of the industry.

Graphics Processing Unit (GPU) Pooling for Large Language Models (LLMs) Market Report Forecast Analysis

| Report Attribute | Details |
|---|---|
| Market Size Value In 2026 | $3.11 billion |
| Revenue Forecast In 2035 | $8.11 billion |
| Growth Rate | CAGR of 26.8% from 2026 to 2035 |
| Base Year For Estimation | 2025 |
| Actual Estimates/Historical Data | 2020-2025 |
| Forecast Period | 2026 - 2030 - 2035 |
| Market Representation | Revenue in USD Billion and CAGR from 2026 to 2035 |
| Segments Covered | Component, Deployment Mode, Enterprise Size, Application, End-User |
| Regional Scope | Asia-Pacific, Western Europe, Eastern Europe, North America, South America, Middle East, Africa |
| Country Scope | The countries covered in the report are Australia, Brazil, China, France, Germany, India, ... |
| Key Companies Profiled | Microsoft Corporation, Amazon Web Services Inc., International Business Machines Corporation, Oracle Corporation, CoreWeave Inc., DigitalOcean Inc., Cyfuture AI, NVIDIA Corporation, Vast.ai, GMI Cloud, Nebius Group N.V., Salad Technologies Inc., Vultr Holdings LLC, Hivenet, AceCloud Hosting Pvt. Ltd., Paperspace Inc., Jarvis Labs, Hyperstack Cloud, Lambda Labs Inc., Akash Network, NodeGoAI, Neysa, and RunPod Inc. |
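The growth rates quoted in the report text can be cross-checked with the standard compound annual growth rate formula, CAGR = (end value / start value)^(1 / years) - 1. The short sketch below applies it to the market sizes stated in the text ($2.45 billion in 2025, $3.11 billion in 2026, $8.11 billion in 2030). The helper name `cagr` is an assumption made for illustration, and because the published figures are rounded, the results agree with the stated rates only approximately.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Market sizes in USD billion, as stated in the report text.
growth_2025_to_2026 = cagr(2.45, 3.11, 1)  # one-year growth
growth_2026_to_2030 = cagr(3.11, 8.11, 4)  # four-year forecast window

print(f"2025 to 2026: {growth_2025_to_2026:.1%}")
print(f"2026 to 2030: {growth_2026_to_2030:.1%}")
```

The one-year figure comes out near the 26.8% quoted for 2025 to 2026, and the four-year figure matches the 27.1% quoted for the period to 2030.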